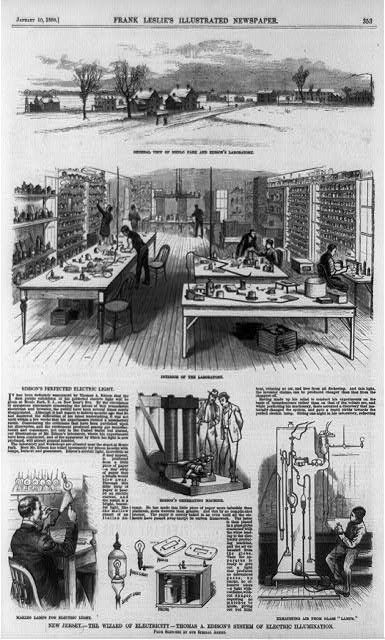

Ever wondered why it was Apollo 11 that landed on the Moon and not Apollo 1? After all, this was the first time humans had attempted to land on the Moon.

This project had unlimited funds, so the Apollo team was able to run it in incremental stages and iterate. In other words, they took the lean approach: testing and learning from each stage before moving on to the next, on the journey to ultimately landing on the Moon.

Of course, most big projects do not have unlimited funding like this. Iterating on a skyscraper or on the Olympic Games, on the other hand, would be prohibitively costly. Building a skyscraper multiple times before discovering the correct way would blow the construction budget. Hosting mock Olympic Games would likewise make the whole endeavour too expensive for cities to take on.

Luckily, this approach works well in software, where it’s relatively cheap to iterate: try out an idea, change what has been built, and try again. Unfortunately, it is still far too common to see software projects treated as construction projects, where this discovery phase is skipped entirely.

Inspired by the book *How Big Things Get Done: The Surprising Factors That Determine the Fate of Every Project, from Home Renovations to Space Exploration and Everything in Between* by Bent Flyvbjerg and Dan Gardner.